‘Doing better than humans’: home care providers redefine AI risk, trust and reliability

This article is part of your HHCN+ membership

On the stage and in the hallways of our Capital+Strategy conference this week in Charlotte, AI was on everyone’s lips – but not in the ways I’ve heard before.

AI has already evolved from a theoretical future to an actionable path. But conversations have shifted from fear of AI risks to acceptance of AI, and even confidence in AI as a tool to reduce risk. More than I’ve heard before, providers agree that AI is more reliable than humans in many areas.

The tension came when I heard some providers highlight the moral, relationship and trust issues surrounding AI. Opinions varied on whether AI should be used in a more human-centric context and on how doctors, healthcare providers, clients and patients would respond to its use. These conversations are more important than ever as AI is heralded as the answer to the industry’s workforce and efficiency challenges.

In this week’s exclusive members-only HHCN+ Update, I summarize my key AI-focused takeaways from Capital+Strategy, with analysis and insights, including:

– Why providers’ confidence in AI is growing and what that means for business operations

– The moral AI debate

– Where the interests of healthcare providers and doctors lie

The rapid evolution

I often think about risks when I talk about AI. I’ve played around with some of the most common hallucination-inducing prompts for AI tools, and you can send an AI into a tailspin by asking it to generate a seahorse emoji. When I think about the application of AI in healthcare, these examples come to mind. And providers are aware of the risks, with some establishing AI-specific policies to ensure compliance.

At Capital+Strategy, I noticed that fewer providers focused on risk in AI discussions. Providers were instead focused on how quickly AI has become significantly more powerful, offering a level of reliability that humans can’t replicate.

According to David Bell, the founder and CEO of Grandcare Health, it’s difficult to embrace this rapid improvement because people often struggle to “[understand] exponential progress.” The systems available a year ago were about one-tenth as good as those available now, he said.

“We can already use these systems to avoid the silly little paper cuts that make our lives miserable all day long. But every year it will be able to do bigger and better things,” Bell said on a panel.

Other leaders made similar comments.

“We see AI being able to answer incoming calls and represent your business to a customer for the very first time,” said Jeff Salter, founder and CEO of Caring Senior Service. “The things we see are that they do it better than humans. They understand a client’s needs better, and I think most people in this room a year ago would have said, ‘That is never going to be replaced.’ And we see that things are getting better. There are just a lot of options that aren’t just back office, but are now almost considered front office.”

Still, providers should be ready in case something goes wrong, Salter said. He recommended providers hire a PR firm as soon as they start using AI so they are prepared for any AI missteps.

Ralph Laughton, the CEO and head of strategic partnerships at Heart, Body & Mind Home Care, said he has made a 180-degree turn on AI in the past year, and has now embraced the technology and is “all in.” Still, he outlined one of the risks he is concerned about.

“If we use a listening device in someone’s home at night… are we taking responsibility for making sure we respond if something happens overnight, and if it doesn’t, is that a problem for us?” Laughton asked. “I wouldn’t want to take on any more risk, or I would want to do something that limits exposure to it. So maybe an exemption.”

After this event, it’s clear to me that Salter is right about providers’ changing perception of AI. Providers now see AI as a tool to mitigate risk in certain scenarios, rather than as a questionable leap into unknown technology.

Trusting AI

But while the general enthusiasm for AI was palpable, disagreements still led to some debates. Providers all agreed that home care is a people business and that AI is an important part of protecting the sustainability of the business – but divisions emerged over how AI taps into the human element.

In my personal life, I now experience far less fear about a “2001: A Space Odyssey”-esque robot takeover attempt than I did just two or three years ago. And research shows that people are increasingly choosing AI, even when it comes to their health.

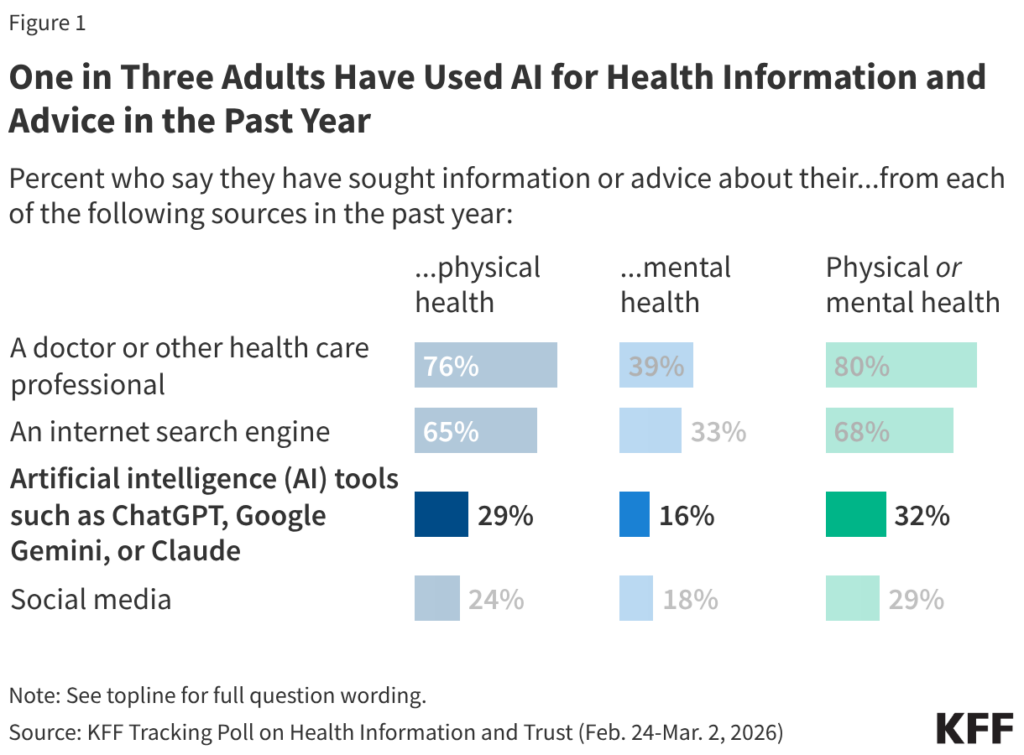

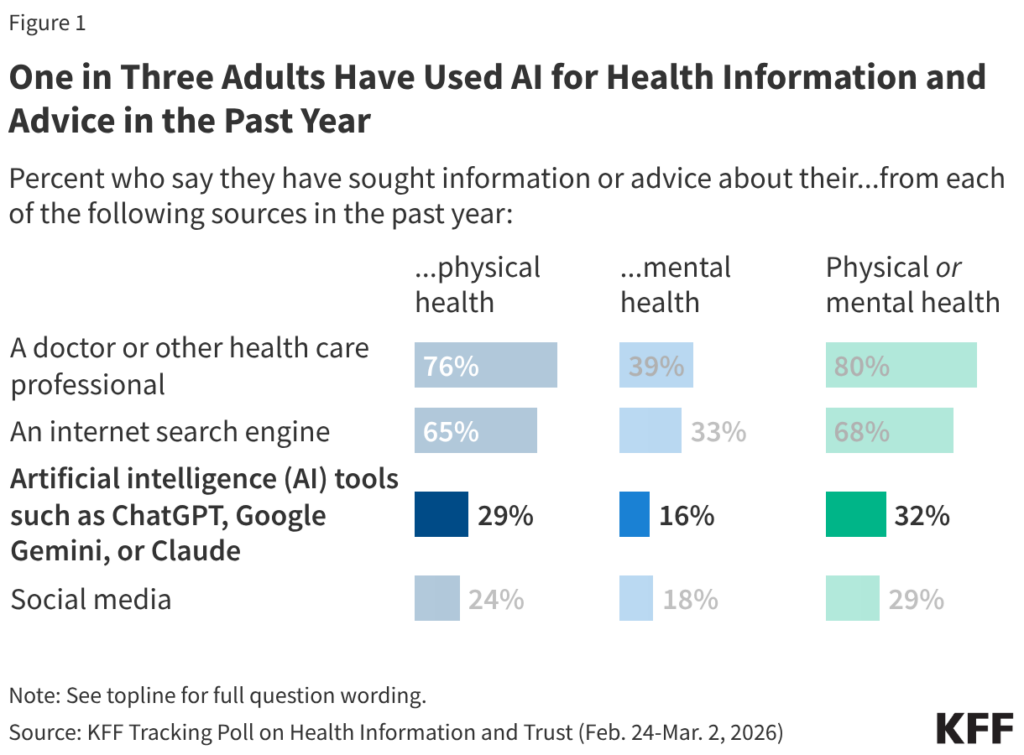

New research published Wednesday by KFF shows that about a third of adults use AI for health information and advice. That is huge – it shows that the advanced AI tools for healthcare, which will likely be part of the AI transformation of the home care industry, have support from a large part of the population.

Interestingly, the same survey found that 77% of the public expressed concerns about data privacy when sharing personal medical information with AI tools – but 41% of those who have used AI for physical or mental health information and advice say they have uploaded personal medical information to an AI tool or chatbot.

So the “Space Odyssey” concerns may not have been completely addressed, but they are not stopping a large segment of the population from accepting the role of AI in their health journey. But the tension between increasing use of AI and ongoing data privacy concerns was also evident at Capital+Strategy, especially in providers’ moral dilemma over the extent to which they should use AI to communicate with clients or patients, or providers and physicians.

“That is just a concern I have about where we’re going,” Bob Roth, managing partner of Cypress Home Care, said on a panel. “Because we’re trying to do more with less, and a lot of people are using machines and AI to communicate with our healthcare providers, and I don’t think that’s the right path for us to take. We need to build relationships.”

Other people I spoke to pointed out that AI serves as an equalizer for the people behind home care. Salter said he was convinced to use AI health care screening tools after hearing this story:

“A young woman received a call from an AI agent who was screening her for a job interview,” Salter said. “Her impression was that she really liked the AI interaction, and that was because she had a lisp and in her normal interactions with people she was immediately excluded from a job. Highly qualified, but immediately discounted. So if AI can do that kind of thing, take an individual and overcome the biases, [that is a] win.”

Not all healthcare providers will be enthusiastic about AI, according to Megan Casey, senior vice president of Live Well Home Care.

“If you had asked me last year if technology and AI have a place among healthcare providers and clients, I would have said, if you can teach a computer to have empathy, call me,” Casey said. “And then someone called my bluff and called me back and said, ‘Well, this computer has empathy, has learned empathy.’ Healthcare providers don’t want learned empathy. They don’t want to talk to a computer. They want to talk to a real person who understands them, who remembers that it’s their birthday, or that their child is getting ready to go back to school, or that they’re afraid of big dogs, or that they hate smokers.”

I have not found any specific data on how healthcare providers and physicians feel about AI implementation, but such information will undoubtedly become available, and I’m interested in hearing from healthcare providers who have surveyed their own staff about the use of AI in supporting healthcare providers. My conclusion from Capital+Strategy is that, at the very least, providers should over-communicate about implementing internal AI tools and be willing to raise their staff’s concerns.

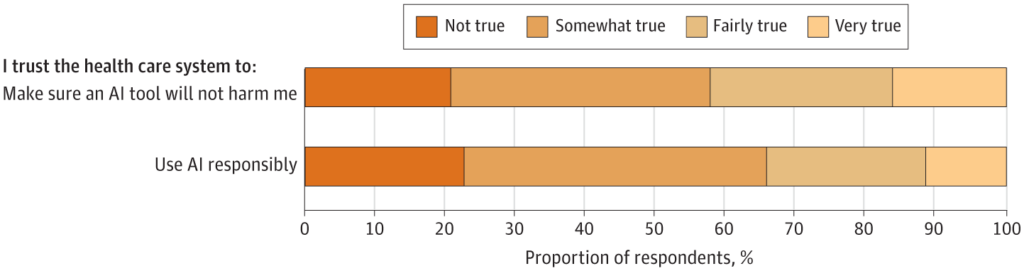

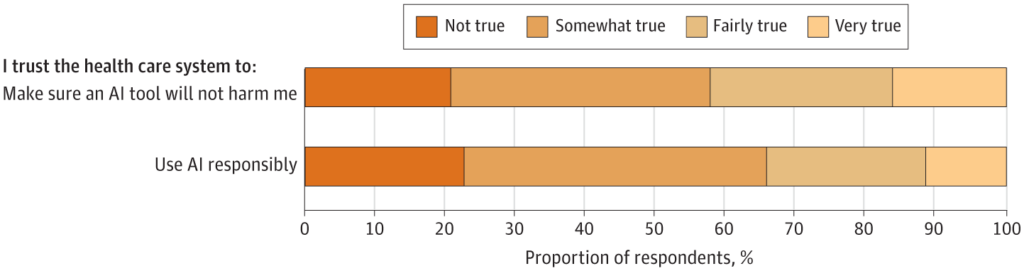

As for whether a patient will trust the AI agent or their home care provider, the story is a bit murky. A study published in JAMA (in February 2025, so take it with a grain of salt, as people’s impressions may have changed) found that most respondents – 65.8% – had little confidence in their healthcare system to use AI responsibly, and most – 57.7% – also reported little confidence that their healthcare system would keep an AI tool from harming them.

So even as Capital+Strategy providers expressed increasing confidence in AI, the findings suggest they should still exercise caution when launching patient-facing AI tools, and make an effort to explain why the AI tools in use can be trusted to protect sensitive patient data.

My final notable AI takeaway from Capital+Strategy is no surprise to providers: the sea of AI tools is deep and crowded. As operators grapple with how to handle AI, they must ensure the tools they use solve a problem they actually have, to avoid wasted investment and the potential to undermine the trust of healthcare providers or clients.

“You have to define the problem before you find the solution,” said Sherry Kesler, vice president of post-acute care services at West Virginia University Health. “Many people want to go for the AI solution before they even know what their problem is, and then they choose the wrong solution and then don’t integrate it into their existing workflows.”